Rebuilding Human Presence in XR Through Holoportation

Immersive technologies continue to evolve rapidly. Rendering quality is improving, devices are becoming more capable, and shared virtual environments are increasingly realistic. Yet realism alone does not guarantee meaningful human interaction.

A central question remains: how can XR more effectively support the feeling of shared presence between people who are physically apart?

PRESENCE addresses this question through three interconnected pillars: high-fidelity holoportation, advanced haptic systems, and intelligent virtual humans. Each pillar approaches human presence from a different angle. Together, they expand the expressive and interactive capabilities of extended reality.

Holoportation focuses specifically on transmitting the presence of real individuals into immersive environments through real-time volumetric reconstruction.

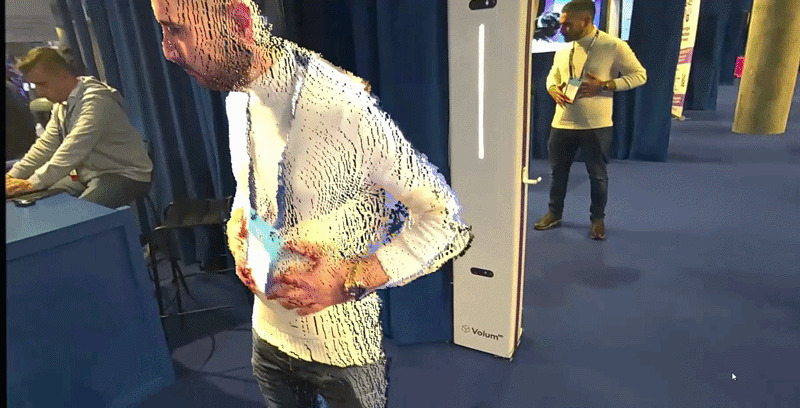

At Integrated Systems Europe (ISE) 2026, i2CAT and its spin-off Volum Technologies demonstrated this capability through a live proof of concept called HoloCall, which showcased compact, integrated volumetric capture technology in action.

Beyond Conventional Remote Communication

Remote communication technologies are now embedded across enterprise collaboration, training environments, healthcare, and cultural institutions. These interactions take many forms, from traditional video calls to shared immersive spaces. They make communication across distance possible, yet they do not fully recreate the sensation of being physically together. Users can see and hear one another, but the embodied feeling of “being there” is often reduced.

Holoportation addresses this perceptual gap by transmitting a natural three-dimensional reconstruction of a person that preserves volume, scale, and movement. During the HoloCall demonstration, two individuals located in separate physical spaces interacted through life-size volumetric representations experienced via XR headsets. The capture process relied on calibrated multi-camera systems, while volumetric capture totems (HoloTotems), developed and supplied by Volum Technologies, supported the acquisition setup. Participants perceived one another at accurate scale and position within the same virtual scene.

The interaction dynamics reflected this spatial coherence. Body orientation, interpersonal distance, and gesture unfolded in ways consistent with physical meetings. These behavioural responses suggest that when reconstruction fidelity and latency are sufficiently controlled, XR interaction can approximate familiar face-to-face dynamics more closely.

This approach does not replace other XR interaction models. Instead, it extends the range of possibilities by enabling direct transmission of embodied presence when that level of fidelity is required.

The Technology Behind Holoportation

At the core of the PRESENCE holoportation pillar lies a real-time volumetric pipeline developed by i2CAT in collaboration with Volum Technologies. The system is designed to balance reconstruction fidelity, computational efficiency, and network performance. Participants are captured through calibrated multi-camera systems that record the person simultaneously from different viewpoints. The captured data is then combined to generate a dynamic three-dimensional reconstruction of the person’s geometry and movement.
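To make the idea of combining calibrated viewpoints more concrete, here is a minimal sketch of one common approach: each camera's 3D points are moved into a shared world frame using that camera's calibration (a rotation and a translation), then merged. This is an illustration under stated assumptions, not the i2CAT or Volum Technologies pipeline. The function names `to_world` and `fuse`, the example calibration values, and the sample points are all hypothetical.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]
Matrix3 = Sequence[Sequence[float]]
View = Tuple[List[Point], Matrix3, Point]  # (points, rotation, translation)


def to_world(point: Point, rotation: Matrix3, translation: Point) -> Point:
    """Apply a rigid transform: world = rotation * camera_point + translation."""
    return tuple(
        sum(rotation[r][c] * point[c] for c in range(3)) + translation[r]
        for r in range(3)
    )


def fuse(views: List[View]) -> List[Point]:
    """Merge per-camera points into a single list in the shared world frame."""
    fused: List[Point] = []
    for points, rotation, translation in views:
        fused.extend(to_world(p, rotation, translation) for p in points)
    return fused


# Hypothetical calibration: a front camera aligned with the world frame,
# and a side camera rotated 90 degrees about the vertical (y) axis.
IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
ROT_Y_90 = [[0.0, 0.0, 1.0], [0.0, 1.0, 0.0], [-1.0, 0.0, 0.0]]

front_view: View = ([(0.0, 1.0, 1.0)], IDENTITY, (0.0, 0.0, 0.0))
side_view: View = ([(0.0, 1.0, 1.0)], ROT_Y_90, (-1.0, 0.0, 0.0))

cloud = fuse([front_view, side_view])
```

A real system would also need to handle colour, depth noise, overlapping regions, and time synchronisation between cameras, none of which this sketch attempts.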

This reconstruction updates continuously as the participant moves, preserving subtle gestures, posture changes, and body orientation. Behavioural fidelity is critical because small movements often carry communicative meaning. For this reason, the reconstruction pipeline prioritizes geometric accuracy and temporal consistency across frames.

Once captured, the volumetric data must be transmitted efficiently. Volumetric data is significantly more demanding than conventional video in terms of bandwidth and processing requirements. To enable real-time communication, the system applies compression and projection techniques that reduce network load while maintaining perceptual quality. Adaptive streaming mechanisms dynamically regulate transmission rates according to available bandwidth, ensuring stable reconstruction and synchronized rendering between participants.
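As a rough illustration of how adaptive streaming can regulate quality, the sketch below smooths recent bandwidth measurements and picks the highest quality level that fits within the estimate. It is a generic pattern, not the mechanism used in the PRESENCE system. The quality ladder, its bitrates, the smoothing factor, and the headroom margin are assumed values chosen only for the example.

```python
from typing import List, Tuple

# Hypothetical quality ladder: (label, required bandwidth in Mbit/s).
LADDER: List[Tuple[str, float]] = [
    ("low", 5.0),
    ("medium", 15.0),
    ("high", 40.0),
]


def smooth(previous: float, sample: float, alpha: float = 0.3) -> float:
    """Exponentially weighted moving average of bandwidth samples."""
    return alpha * sample + (1 - alpha) * previous


def pick_level(estimate_mbps: float, headroom: float = 0.8) -> str:
    """Return the highest level whose bitrate fits within the usable budget.

    Only a fraction of the estimate (headroom) is used, so that short
    dips in bandwidth are less likely to stall the stream. If nothing
    fits, the lowest level is returned as a fallback.
    """
    budget = estimate_mbps * headroom
    chosen = LADDER[0][0]
    for label, required in LADDER:
        if required <= budget:
            chosen = label
    return chosen


# Simulated bandwidth measurements (Mbit/s), made up for illustration.
estimate = 50.0
for sample in [50.0, 30.0, 12.0, 8.0]:
    estimate = smooth(estimate, sample)
    level = pick_level(estimate)
```

Smoothing the estimate avoids switching quality on every momentary fluctuation, which would otherwise be visible to participants as flicker in the reconstruction.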

Latency is another critical factor. Even small delays can disrupt conversational rhythm. By optimizing capture, compression, transmission, and rendering stages, the system maintains low end-to-end delay, reinforcing the psychological sense of co-presence during live interaction.
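One simple way to reason about end-to-end delay is as a budget split across the four stages named above. The sketch below adds up per-stage delays, compares the total with a target, and reports which stage contributes most. All figures, including the 100 ms target, are hypothetical placeholders and not measurements from HoloCall.

```python
from typing import Dict

# Hypothetical per-stage delays in milliseconds (not measured values).
STAGES_MS: Dict[str, float] = {
    "capture": 15.0,
    "compression": 20.0,
    "transmission": 40.0,
    "rendering": 12.0,
}

# Hypothetical target for comfortable conversation, assumed for the example.
TARGET_MS = 100.0


def end_to_end_ms(stages: Dict[str, float]) -> float:
    """Total delay, assuming the stages run one after another."""
    return sum(stages.values())


def slowest_stage(stages: Dict[str, float]) -> str:
    """Name of the stage that contributes the most delay."""
    return max(stages, key=stages.get)


total = end_to_end_ms(STAGES_MS)
within_budget = total <= TARGET_MS
bottleneck = slowest_stage(STAGES_MS)
```

Framing latency this way shows where optimisation effort pays off: reducing the largest stage frees the most room in the budget.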

A Shift in How We Collaborate

When interaction regains spatial depth and accurate scale, communication patterns begin to shift. During the ISE demonstration, participants instinctively adjusted their body orientation and respected interpersonal distance in ways consistent with physical meetings. This spontaneous behaviour reflects one of the central objectives of PRESENCE: restoring the spatial cues that structure human interaction.

Such capabilities have clear implications across multiple sectors. In enterprise collaboration, life-size volumetric presence can improve clarity during technical discussions and design reviews. In healthcare contexts, it can strengthen trust and empathy during remote consultations. In training scenarios, experts can guide participants with a level of immediacy that conventional video systems cannot replicate.

Rather than focusing solely on visual enhancement, holoportation reshapes how distance is experienced within digital environments.

Progress, Challenges and Ongoing Development

The i2CAT demonstration confirmed that real-time volumetric reconstruction and immersive rendering can operate reliably outside laboratory environments. It also showed that commercially available XR hardware can support life-size holographic interaction, bringing advanced research closer to structured deployment.

At the same time, the demonstration highlighted areas where innovation continues. Volumetric data remains computationally and network intensive, so ongoing work focuses on refining compression efficiency and adaptive streaming mechanisms, and on raising real-time reconstruction fidelity while reducing computational overhead. Capture infrastructures are effective but still rely on dedicated setups, motivating research into more compact and scalable configurations.

Within PRESENCE, i2CAT’s holoportation continues to evolve as a foundational pillar, integrated with haptic systems and intelligent virtual humans. The broader ambition is not simply to enhance visual realism, but to reconstruct the sensory and spatial qualities that make human interaction meaningful.

HoloCall provided a concrete validation of this direction, demonstrating that immersive communication can support accurate scale, depth, and natural movement in real-time interaction. In doing so, it reinforces the central vision of PRESENCE: XR experiences that feel less digital and more human, underpinned by research collaboration between i2CAT and its spin-off Volum Technologies.